




Related Content From The Pandipedia

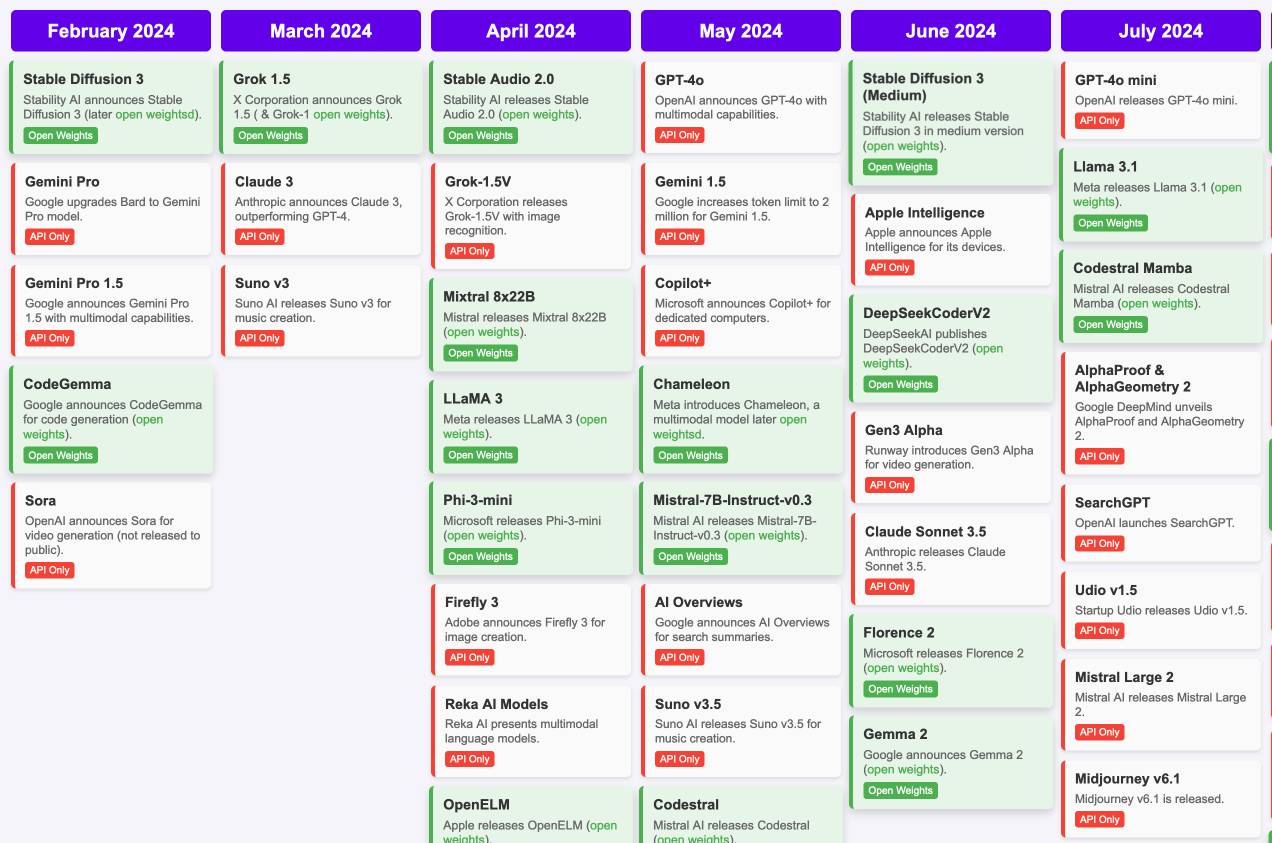

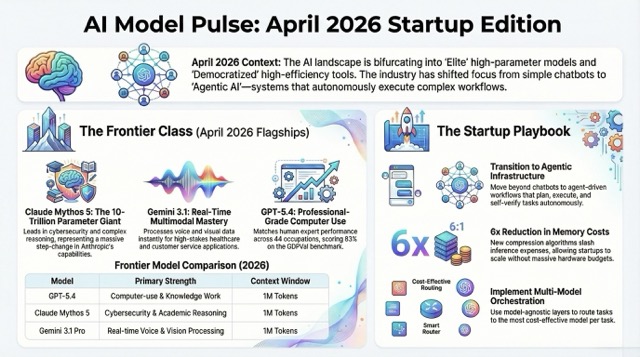

Write a Twitter thread (X thread) about the very latest AI news, formatted as follows:

1. **First tweet (hook):**

* Spark curiosity with a provocative question or surprising statement about AI today.

* Tease that you'll share several must-know developments in the thread.

* Keep it ≤280 characters and avoid hashtags.

2. **Subsequent tweets (one per news item):** For each:

* **Headline/Context (concise):** A short phrase identifying the development (e.g., “Major breakthrough in multimodal models”).

* **Key insight:** State the single most important takeaway or implication (“It can now generate lifelike videos from text prompts, potentially transforming content creation.”).

* **Why it matters / curiosity angle:** A brief note on impact or a rhetorical question that encourages engagement (“Could this replace human editors?”).

* **Brevity:** Stay within 280 characters total.

* **Tone:** Informational yet conversational and shareable—use an emoji or casual phrasing if it fits, but avoid hashtags.

* **Optional source reference:** If possible, mention “According to \[source]” or “As reported by \[outlet] on \[date]” in as few words as feasible.

3. **Final tweet (call-to-action):**

* Invite replies or retweets (e.g., “Which of these AI advances surprises you most? Reply below!”).

* Keep it concise and avoid hashtags.

Additional notes:

* Assume access to up-to-date data; for each item, fetch or insert the date/source before writing.

* Ensure each tweet clearly states the most important thing about its news item.

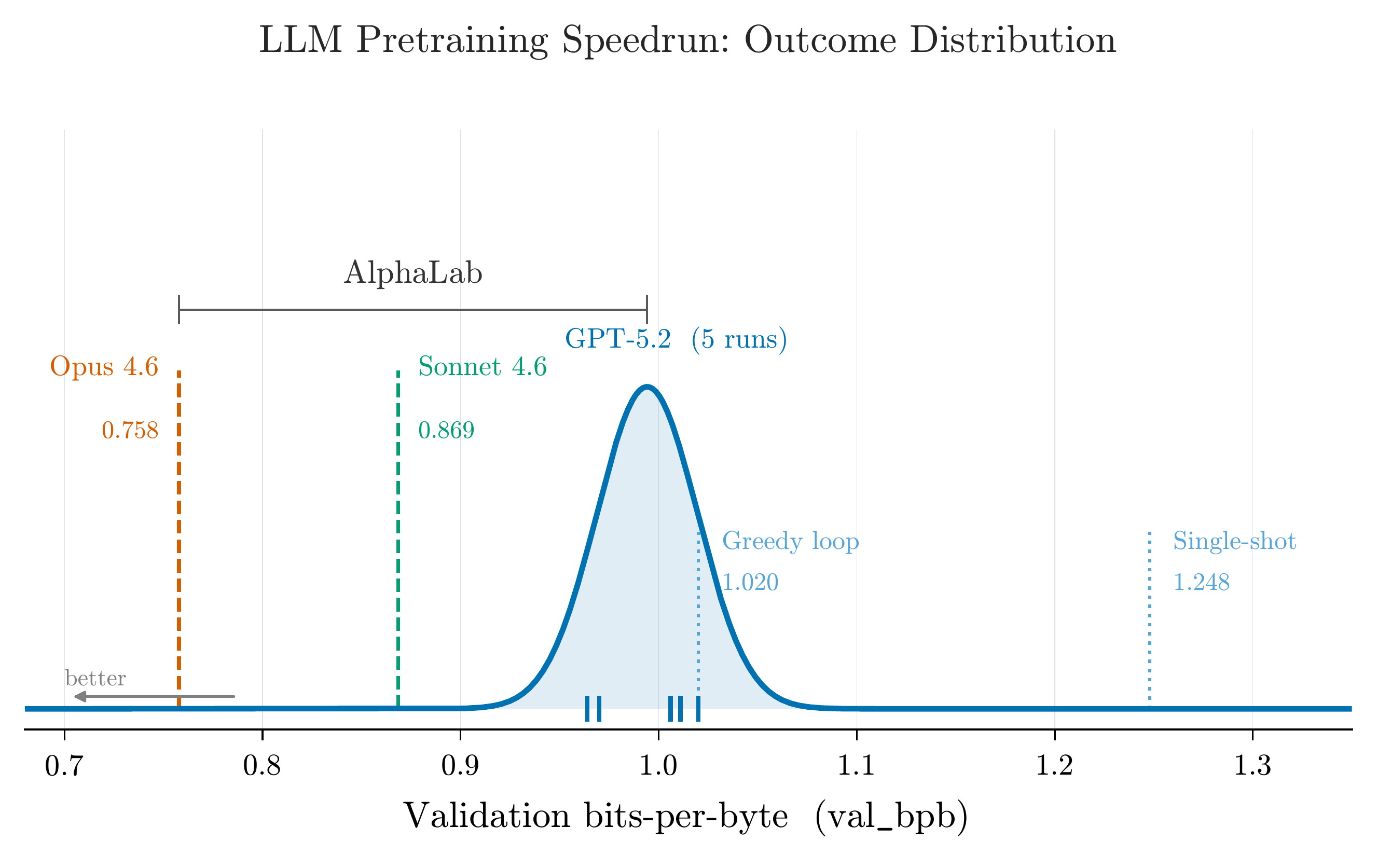

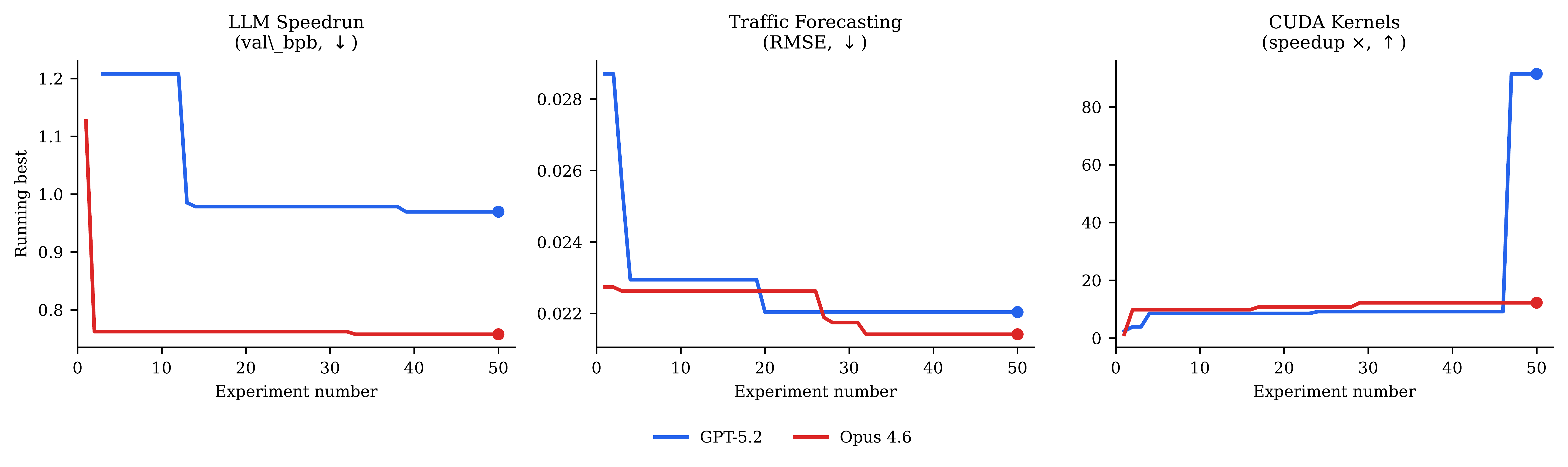

* Avoid hashtags altogether.