Tips and tricks for building AI agents with LLM

Building Effective LLM-Powered Search Agents: Practical Tips and Tricks

Search agents are one of the most impactful agentic applications because they combine large language models (LLMs) with web search, retrieval, tools, and iterative reasoning to answer complex, time-sensitive queries reliably. This report synthesizes current best practices across architecture, retrieval-augmented generation (RAG), tool use, evaluation, and safety to guide advanced practitioners building production-grade search agents[1][2].

You will find actionable guidance on agent architecture and control flow, RAG data and retrieval optimization, rigorous evaluation strategies, and risk and oversight patterns that together raise the reliability and transparency of search agents in real-world deployments[1][9][12][13].

Agent Architecture: Keep It Simple, Structured, and Observable

Start simple and expand gradually as confidence grows; overengineering early prototypes often hides failure modes and slows iteration[1]. Robust search agents rely on clean tool interfaces to web search, browsers, APIs, and data stores; explicit planning; persistent memory; and a controller that manages action selection and error handling[1][4].

Instrument for observability from day one: capture the full execution trace including prompts, tool calls and results, intermediate thoughts or plans, and decision rationales to enable debugging, evaluation, and safe operations, especially in multi-step or multi-agent workflows[2][13]. Track not just final task success but intermediate progress, such as whether the agent chose the right tool and formed well-structured API calls, so you can pinpoint breakdowns efficiently[5].
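As a rough illustration of what capturing the full execution trace can look like, here is a minimal sketch of an in-memory trace recorder. The names `TraceEvent` and `TraceRecorder`, the event kinds, and the sample run are all hypothetical; a production system would more likely use an existing tracing or logging backend.

```python
import json
import time
from dataclasses import dataclass, field, asdict
from typing import Any


@dataclass
class TraceEvent:
    step: int
    kind: str  # hypothetical kinds: "prompt", "tool_call", "tool_result", "decision"
    payload: dict[str, Any]
    timestamp: float = field(default_factory=time.time)


class TraceRecorder:
    """Collects the events of one agent run in the order they happen."""

    def __init__(self, run_id: str) -> None:
        self.run_id = run_id
        self.events: list[TraceEvent] = []

    def record(self, kind: str, **payload: Any) -> TraceEvent:
        # The step index is simply the position of the event in the run.
        event = TraceEvent(step=len(self.events), kind=kind, payload=payload)
        self.events.append(event)
        return event

    def to_jsonl(self) -> str:
        # One JSON object per line, so runs can be appended to a log file.
        return "\n".join(
            json.dumps({"run_id": self.run_id, **asdict(e)}) for e in self.events
        )


# Illustrative run with made-up content.
trace = TraceRecorder(run_id="demo-run")
trace.record("prompt", text="Who maintains project X?")
trace.record("tool_call", tool="web_search", arguments={"query": "project X maintainers"})
trace.record("tool_result", tool="web_search", num_results=3)
trace.record("decision", rationale="Top result is an official page; answer from it.")
```

Because every event carries a step index and a kind, later analysis can ask questions such as "which tool call preceded the wrong answer" without re-running the agent.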

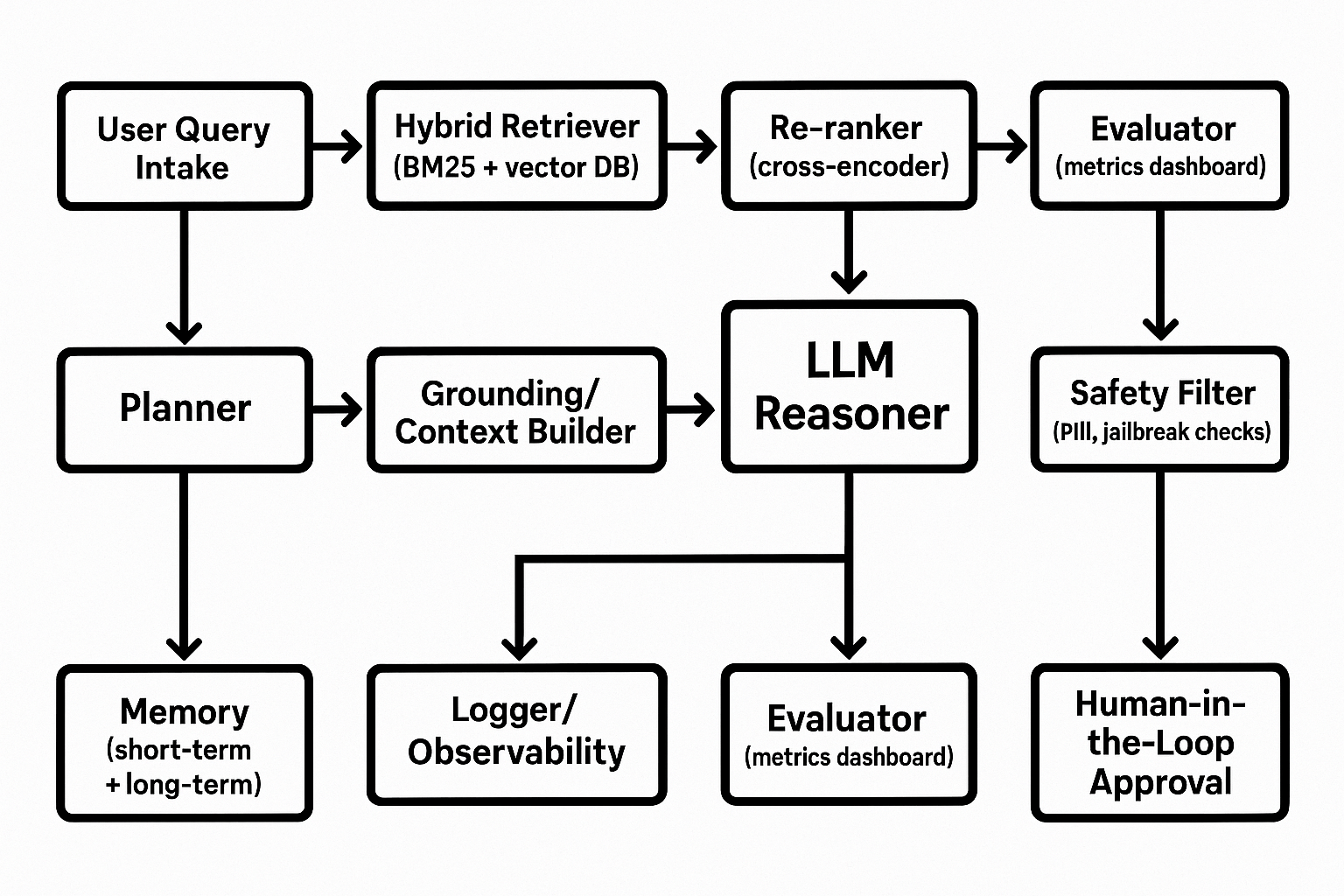

Conceptual Architecture of an LLM Search Agent

A block diagram showing user query intake, planner, hybrid retriever (BM25 + vector), re-ranker, grounding/context builder, LLM reasoning, tool calling (web search, browser, APIs), memory store, logger/observability, evaluator, safety filter, and human-in-the-loop approval.

- Planning and control: Explicitly decompose tasks, plan steps, and implement robust error handling and retries to reduce unpredictable or hallucinatory behavior

- Memory: Persist key facts and decisions across steps and sessions to avoid repeated work and maintain context

- Tools: Define tight schemas and least-privilege access for search, browsing, and API calls, with clear input/output contracts

- Observability: Log prompts, tool traces, and decisions for offline analysis, evaluation, and incident response

These measures reduce the chances of cascading errors in long interactions and improve the predictability of agent behavior in open-ended environments[4].
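The tool-contract and retry points above can be sketched as follows. This is only one possible shape, under stated assumptions: `ToolSpec`, `ToolError`, `call_tool`, and the flaky `web_search` stand-in are hypothetical names invented for this example, and the argument check is deliberately simpler than a full schema validator.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class ToolSpec:
    name: str
    required_args: dict[str, type]  # argument name -> expected Python type
    run: Callable[..., Any]


class ToolError(Exception):
    """Raised when a call violates the contract or keeps failing."""


def call_tool(spec: ToolSpec, args: dict[str, Any], max_attempts: int = 3) -> Any:
    # Check the input contract before the tool is ever invoked.
    missing = set(spec.required_args) - set(args)
    unexpected = set(args) - set(spec.required_args)
    if missing or unexpected:
        raise ToolError(
            f"{spec.name}: missing={sorted(missing)} unexpected={sorted(unexpected)}"
        )
    for name, expected in spec.required_args.items():
        if not isinstance(args[name], expected):
            raise ToolError(f"{spec.name}: '{name}' must be {expected.__name__}")

    # Retry failures a bounded number of times, then surface the error.
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        try:
            return spec.run(**args)
        except Exception as exc:  # a real controller would catch narrower errors
            last_error = exc
    raise ToolError(f"{spec.name} failed after {max_attempts} attempts") from last_error


# Hypothetical search tool that fails once, then succeeds.
calls = {"count": 0}


def flaky_search(query: str) -> list[str]:
    calls["count"] += 1
    if calls["count"] == 1:
        raise TimeoutError("simulated transient failure")
    return [f"result for {query}"]


search = ToolSpec(name="web_search", required_args={"query": str}, run=flaky_search)
results = call_tool(search, {"query": "latest release notes"})
```

Rejecting malformed calls before execution keeps a bad plan from turning into a bad side effect, and the bounded retry loop keeps transient failures from cascading into later steps.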

RAG as the Backbone of Search Agents

A high-performing search agent typically centers on a RAG pipeline tuned end to end: clear goals, pilot-based rollout, careful component selection, and continuous monitoring to balance performance, cost, and accuracy[7][8].

Data quality dominates outcomes: curate authoritative, current sources; clean and structure content; and attach rich metadata to improve grounding and answer quality[6][7][10].

- Chunking: Prioritize experimentation with chunk boundaries and overlap; chunking often yields larger gains than embedding tweaks

- Chunk size: Larger chunks can help but risk noise; tune to your corpus and tasks. One example study found a 1024-token window with a 128-token overlap to be optimal for its dataset

- Overlap: Include overlap between adjacent chunks to preserve context for downstream ranking and generation

These practices can significantly enhance retrieval quality and overall RAG performance when tuned against a representative benchmark for your domain[6][8].
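To make the size and overlap parameters concrete, here is a minimal sketch of fixed-window chunking with overlap. It assumes the document has already been tokenized into a list; the function name `chunk_tokens` and the placeholder tokens are hypothetical, and the 1024/128 values simply reuse the figures from the example study mentioned above rather than a recommendation.

```python
def chunk_tokens(tokens: list[str], size: int, overlap: int) -> list[list[str]]:
    """Split tokens into windows of `size` where neighbors share `overlap` tokens."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    stride = size - overlap  # how far the window advances each time
    chunks: list[list[str]] = []
    for start in range(0, len(tokens), stride):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reached the end of the document
    return chunks


# Placeholder tokens standing in for a real tokenized document.
words = [f"w{i}" for i in range(2000)]
chunks = chunk_tokens(words, size=1024, overlap=128)
```

Keeping `size` and `overlap` as plain parameters makes it easy to sweep several settings against a benchmark of real queries instead of committing to one configuration up front.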

- Hybrid retrieval: Combine keyword search (e.g., BM25) with vector similarity to maximize recall on varied user phrasing

- Re-ranking: Use a cross-encoder re-ranker to refine the top-k results at higher computational cost

- Vector store choice: Expect a speed-quality trade-off; reported comparisons show latency and retrieval quality differences across systems

In practice, hybrid queries mitigate vocabulary mismatch, while cross-encoder re-ranking improves context precision and recall at the expense of runtime; evaluations also show vector stores can differ on latency versus retrieval effectiveness, so benchmark with your data and requirements[11][6][8].
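One common way to combine a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The source does not name a fusion method, so treat this as one illustrative option rather than the approach the cited work uses. The document IDs and the two input rankings are made up, and `k = 60` is a commonly used default, not a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into a single ranking."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Documents near the top of any list contribute the most.
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical outputs of a BM25 retriever and a vector retriever.
bm25_ranking = ["d1", "d2", "d3"]
vector_ranking = ["d3", "d1", "d4"]
fused = reciprocal_rank_fusion([bm25_ranking, vector_ranking])
```

RRF only needs ranks, not raw scores, which sidesteps the problem that BM25 scores and cosine similarities live on different scales. The fused list would then be the candidate set handed to a cross-encoder re-ranker.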

Tool Use Patterns for Search Agents

Augment LLMs with tools for web search, browsing, API calls, database queries, and code execution, and feed results back into the model's context for grounded reasoning and reduced hallucinations[12]. LLMs serve as natural-language interfaces between users and tools and can translate between structured tool schemas and human queries[12].

RAG adds an independent knowledge substrate such as vector databases, relational stores, or knowledge graphs to cross-verify and cite information for transparency and trust[12][15].
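A minimal sketch of how retrieved snippets might be fed back into the model's context with citation markers is shown below. The `Snippet` type, the prompt wording, and the example sources are assumptions made for illustration; real systems usually add token budgeting and deduplication on top of this.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    source: str  # where the text came from, e.g. a URL or document ID
    text: str


def build_grounded_prompt(question: str, snippets: list[Snippet]) -> str:
    """Number each snippet so the model can cite it as [1], [2], and so on."""
    lines = ["Answer using only the numbered sources below and cite them like [1]."]
    for index, snippet in enumerate(snippets, start=1):
        lines.append(f"[{index}] ({snippet.source}) {snippet.text}")
    lines.append(f"Question: {question}")
    return "\n".join(lines)


# Made-up snippets standing in for real tool results.
prompt = build_grounded_prompt(
    "When was the library first released?",
    [
        Snippet("https://example.com/changelog", "Version 1.0 was released in 2019."),
        Snippet("https://example.com/about", "The project started as an internal tool."),
    ],
)
```

Numbering the sources in the prompt gives the answer something verifiable to point at, which is what makes later citation checks possible.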

Document the full agent architecture and provide traceability of the agent's internal steps, tool calls, and interactions; publish complete execution traces in research and system reports to support reproducibility and audits[13].

Evaluation, Optimization, and Monitoring

Evaluate both the final outcome and intermediate decisions to identify where the agent succeeds or fails, and use rigorous benchmarks for planning, tool use, reflection, and memory in settings that approximate real applications[2][5].

For RAG-heavy search agents, adopt a multi-faceted evaluation: measure retrieval quality (e.g., precision@k, recall@k), generation quality (faithfulness/factuality, answer correctness and relevancy), and context precision; create a reference dataset with representative questions, ground-truth documents, and human-scored responses to anchor these metrics[8][9][6][7].
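The two retrieval metrics named above can be computed as in the following sketch. It assumes a single query with a known set of relevant document IDs; the function names and sample IDs are illustrative only.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        return 0.0  # convention chosen here for queries with no relevant documents
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / len(relevant)


# Hypothetical retrieval result and ground truth for a single query.
retrieved = ["d3", "d7", "d1", "d9", "d4"]
relevant = {"d1", "d3", "d5"}
p_at_3 = precision_at_k(retrieved, relevant, k=3)
r_at_3 = recall_at_k(retrieved, relevant, k=3)
```

In a reference dataset these values would be averaged over all questions, and tracked separately from generation metrics such as faithfulness.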

In production, continuously monitor interactions and logs to detect regressions, drift, and new failure modes, and couple online monitoring with periodic offline evaluations to maintain alignment with objectives[7].

Iterative optimization techniques like AgentOptimizer can improve agent skills by reviewing historical trajectories and applying strategies such as rollback and early-stop without changing base model weights, enabling safer and cheaper skill refinement over time[3].

When assessing agentic applications, simulate interactive conversations and carefully check each intermediate decision; for RAG, measure retrieval and generation steps separately to localize issues and accelerate fixes[16].
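Checking intermediate decisions can be as simple as comparing the agent's recorded steps against a reference trajectory. The sketch below assumes steps are aligned by position, which is a simplification, and the `Step` type, function names, tool names, and URLs are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class Step:
    tool: str
    arguments: dict[str, Any] = field(default_factory=dict)


def score_steps(actual: list[Step], expected: list[Step]) -> dict[str, float]:
    """Position-by-position comparison against a reference trajectory."""
    if not expected:
        raise ValueError("expected trajectory must not be empty")
    tool_hits = 0
    argument_hits = 0
    for got, want in zip(actual, expected):
        if got.tool == want.tool:
            tool_hits += 1
            if got.arguments == want.arguments:
                argument_hits += 1
    total = len(expected)
    return {
        "tool_accuracy": tool_hits / total,
        "argument_accuracy": argument_hits / total,
    }


def first_divergence(actual: list[Step], expected: list[Step]) -> Optional[int]:
    """Index of the first step that differs, or None if the runs match."""
    for index, (got, want) in enumerate(zip(actual, expected)):
        if got != want:
            return index
    if len(actual) != len(expected):
        return min(len(actual), len(expected))
    return None


# Made-up trajectories: the agent opened the wrong page in its second step.
expected_run = [
    Step("web_search", {"query": "a"}),
    Step("open_page", {"url": "https://example.com/a"}),
    Step("answer"),
]
actual_run = [
    Step("web_search", {"query": "a"}),
    Step("open_page", {"url": "https://example.com/b"}),
    Step("answer"),
]
scores = score_steps(actual_run, expected_run)
diverged_at = first_divergence(actual_run, expected_run)
```

Separating tool choice from argument correctness shows whether a failure came from picking the wrong tool or from forming a bad call to the right one.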

Safety, Security, and Oversight

Establish guardrails that limit excessive agency and scope of actions, with human-in-the-loop controls for high-risk operations; sandbox code execution, restrict credentials and permissions, and perform regular security reviews of plugins and external integrations[12][14].
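A deny-by-default allowlist with a human approval hook is one way to express these guardrails in code. This is a sketch under assumptions: the tool names, the two risk tiers, and the `approve` callback are hypothetical, and a real deployment would also need sandboxing and credential scoping that this example does not cover.

```python
from typing import Callable

# Hypothetical allowlist: tool name -> risk tier.
ALLOWED_TOOLS = {
    "web_search": "low",
    "open_page": "low",
    "send_email": "high",
}


def authorize(tool: str, approve: Callable[[str], bool]) -> bool:
    """Decide whether the agent may run `tool` right now."""
    risk = ALLOWED_TOOLS.get(tool)
    if risk is None:
        return False  # not on the allowlist: deny by default
    if risk == "high":
        return approve(tool)  # high-risk actions wait for a human decision
    return True


# Stand-ins for a human reviewer who rejects or accepts the request.
always_reject = lambda tool: False
always_accept = lambda tool: True

can_search = authorize("web_search", always_reject)
can_email = authorize("send_email", always_reject)
```

Low-risk tools never touch the approval hook, so routine searches stay fast while the few actions with side effects are gated.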

Where feasible, use local tools and local data processing so that sensitive data is not exposed to the LLM; this also reduces token usage. Tell users plainly that the system is AI-driven and can produce errors[12][15].

For agent-based systems, clearly delineate internal LLM operations, external tool calls, and user/system interactions, and report complete execution traces to achieve behavior traceability and support responsible deployment[13].

Quick-Start Checklist for Advanced Search Agents

- Scoping: Define target tasks, success metrics, and acceptable latency/cost envelopes

- Architecture: Start simple with a planner-controller, tightly scoped tools, and full tracing

- RAG data: Curate high-quality, fresh sources with rich metadata; schedule updates

- Chunking: Experiment with size and overlap; validate on real queries

- Retrieval: Use hybrid BM25+vector; add cross-encoder re-ranking for precision-sensitive tasks

- Evaluation: Track retrieval and generation metrics plus intermediate action quality; build a reference set

- Optimization: Iterate with trace reviews and agent-level optimizers; monitor online and offline

- Safety: Enforce least privilege, sandboxing, and human approval for risky actions; ensure transparency

Each item reflects practices shown to improve reliability and maintainability of agentic systems and RAG pipelines in realistic settings[1][6][7][8][9][12][13].

Recommended Videos for Further Study

Deep Dives on Search Agents, RAG Optimization, and Agent Evaluation

Explore tutorials and conference talks that demonstrate end-to-end search agent architectures, hybrid retrieval and re-ranking, and rigorous evaluation of agentic systems with real-world datasets. Look for sessions that include execution traces, dataset construction, and metric dashboards.

Conclusion

High-quality search agents come from disciplined engineering: iterate from simple to sophisticated, build a transparent and controlled tool-using architecture, power it with a tuned RAG pipeline, and evaluate both intermediate actions and final answers. Pair hybrid retrieval with careful chunking and re-ranking, and enforce safety with least privilege, sandboxing, and human oversight. With strong observability, a robust reference dataset, and continuous optimization, you can sustain accuracy, trust, and agility as domains and user needs evolve[1][6][8][9][12][13].

References
