Legendary AI Papers

Highlights pivotal research papers in artificial intelligence that have had significant impacts on the field.

Sustainable development is the development that meets the needs of the present without compromising the ability of future generations to meet their own needs.

Gro Harlem Brundtland[2]

The greatest threat to our planet is the belief that someone else will save it.

Robert Swan[2]

In the long run, the only solution I see to the problem of diversity is the expansion of mankind into the universe by means of green technology.

Unknown[3]

We can never have enough of Nature.

Henry David Thoreau[2]

This guild, dedicated to the honour of the Trinity, had... come to be known by the name we know it today, the Trinity House.

Unknown[1]




In reasoning models, 'overthinking' refers to a phenomenon where a model keeps exploring incorrect alternatives after it has already identified the correct solution, which makes the reasoning process inefficient. The source states that on simpler problems, reasoning models often find the correct solution early but then continue to explore incorrect ones, wasting computational resources. This overthinking leaves the models less efficient than they could be. As problem complexity increases, the models first reach the correct solution later in their thinking, largely after extensive exploration of incorrect paths, which further illustrates the challenges overthinking presents in reasoning contexts[1].
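As a rough illustration of the idea, the sketch below measures where the first correct candidate appears in a reasoning trace and how much of the trace comes after it. It assumes each trace has already been split into candidate solutions and labeled correct or incorrect; that labeling step, and the function names `first_correct_position` and `wasted_fraction`, are hypothetical and not taken from the source.

```python
from typing import Optional, Sequence


def first_correct_position(is_correct: Sequence[bool]) -> Optional[float]:
    """Relative position (0.0 = start) of the first correct candidate.

    Returns None when the trace never reaches a correct candidate.
    """
    for index, ok in enumerate(is_correct):
        if ok:
            return index / len(is_correct)
    return None


def wasted_fraction(is_correct: Sequence[bool]) -> float:
    """Fraction of candidates explored after the first correct one."""
    for index, ok in enumerate(is_correct):
        if ok:
            return (len(is_correct) - index - 1) / len(is_correct)
    return 0.0


# Hypothetical traces: one flag per candidate solution, in order of appearance.
simple_problem = [True, False, False, False]    # correct early, then keeps exploring
harder_problem = [False, False, True, False]    # correct only after wrong paths

print(first_correct_position(simple_problem), wasted_fraction(simple_problem))
print(first_correct_position(harder_problem), wasted_fraction(harder_problem))
```

Under these assumptions, a high wasted fraction on simple problems corresponds to the early-then-keep-exploring pattern described above, while a late first-correct position corresponds to the harder-problem pattern.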


Introduction and Launch

Grok 4, the newest and most advanced artificial intelligence model from Elon Musk's AI company, xAI, launched on July 9, 2025[1][4][5]. This release marks a significant stride in AI capabilities and positions xAI in direct competition with major players like OpenAI's ChatGPT and Google's Gemini[2][3][5]. xAI, founded with the ambitious mission to "understand the true nature of the universe," claims that Grok 4 has pushed the boundaries of practical intelligence and improved the cost curve of AI development[1][3].

Key Features and Variants

Grok 4 is available in several variants, each tailored for different applications. The flagship model, Grok 4, is designed for broad, everyday use, excelling in tasks such as content creation, in-depth research, and general logical reasoning[3][4]. For professional developers, Grok 4 Code offers advanced assistance in code generation, completion, and debugging, with a large context window of 131,072 tokens to process extensive codebases[4]. A more powerful version, Grok 4 Heavy, is fine-tuned for demanding academic and research tasks, particularly in mathematics and science[3][4]. Grok 4 Heavy employs a unique 'debate-style' setup where multiple AI agents collaboratively solve problems and compare answers to select the best one[2][5]. Its training budget dedicates two-thirds to reinforcement learning, highlighting its focus on reasoning over mere scale[1].
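The multi-agent idea can be sketched in a few lines. The source only says that several agents solve the problem and compare answers to select the best one; it does not describe the selection rule. The example below substitutes simple majority voting as a stand-in, and the `select_answer` function and the toy agents are purely hypothetical, not xAI's implementation.

```python
from collections import Counter
from typing import Callable, Sequence

Agent = Callable[[str], str]


def select_answer(question: str, agents: Sequence[Agent]) -> str:
    """Ask every agent the same question and return the most common answer.

    Majority voting is an assumed selection rule; ties go to the answer
    that was produced first.
    """
    if not agents:
        raise ValueError("at least one agent is required")
    answers = [agent(question) for agent in agents]
    best_answer, _count = Counter(answers).most_common(1)[0]
    return best_answer


# Toy agents standing in for independent model runs (hypothetical).
agents = [
    lambda q: "42",
    lambda q: "41",
    lambda q: "42",
]

print(select_answer("What is 6 * 7?", agents))
```

A real system would more plausibly have agents critique each other's reasoning or use a separate judge; voting is used here only because it is the simplest rule that fits the description.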

Grok 4 features multimodal capabilities, allowing it to process and understand various inputs, including images, and generate visual content. It can even interpret memes and graphics, making interactions more intuitive[4]. While its visual skills at launch were noted to be weaker than those of Gemini 2.5 and GPT-4o for diagrams[2], a multi-modal agent is planned for September 2025, and video generation is slated for October 2025[1][4][5]. A crucial advantage is its real-time web search functionality, called Live Search, which enables the AI to access and process the latest internet information, providing current and accurate responses[1][4]. Live Search is priced at an additional $25 per thousand queries; this cost can be managed by embedding fresh data directly into prompts[1]. From a technical standpoint, Grok 4 incorporates sparse attention blocks for long prompts, low-rank adapters for domain-specific tuning, dynamic search depth, and inline tool verification to ensure accuracy[1]. Its end-to-end voice latency has been reduced by 50%, and it offers five distinct voices: clear corporate, relaxed storyteller, energetic coach, neutral explainer, and subtle mentor. Audio is synthesized securely and never stored, for privacy compliance[1].
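To make the Live Search pricing concrete, here is a small cost estimate using the $25 per thousand queries figure quoted above. The function name, the query volumes, and the assumption that a query with fresh data embedded in the prompt needs no Live Search call are illustrative only.

```python
LIVE_SEARCH_USD_PER_1000_QUERIES = 25.0  # figure quoted in the text


def live_search_cost(total_queries: int, queries_with_embedded_data: int = 0) -> float:
    """Estimate the Live Search surcharge in USD.

    Assumes (hypothetically) that queries whose prompts already embed
    fresh data skip Live Search entirely.
    """
    if not 0 <= queries_with_embedded_data <= total_queries:
        raise ValueError("embedded-data queries must be between 0 and total_queries")
    searched = total_queries - queries_with_embedded_data
    return searched / 1000 * LIVE_SEARCH_USD_PER_1000_QUERIES


# Hypothetical monthly volume of 10,000 queries.
print(live_search_cost(10_000))          # every query uses Live Search: 250.0
print(live_search_cost(10_000, 6_000))   # 6,000 queries carry embedded data: 100.0
```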

Performance and Benchmarks

Grok 4 demonstrates frontier-level performance across various benchmarks, often outperforming rivals in tasks requiring multi-step deduction[1][5]. Notably, it has shown impressive results in:

* Humanity's Last Exam (HLE): A challenging test across over 100 subjects aimed at postgraduate depth[1]. Without tools, Grok 4 scored 25.4%, surpassing Google's Gemini 2.5 Pro (21.6%) and OpenAI's o3 (21%) on text-based questions[4][5]. With tools, Grok 4 Heavy achieved 44.4%[5]. For humanities-specific questions within HLE, Grok 4 Heavy reached 92.1%, and standard Grok 4 scored 89.8%[3]. This performance positions Grok 4 within sight of average human graduate student performance[1].

* ARC-AGI-2: Grok 4 scored 16.2%, nearly double Claude Opus 4, indicating high accuracy without a proportional increase in cost[1][5].

* Mathematics Competitions: Grok 4 Heavy achieved a perfect score on the AIME (American Invitational Mathematics Examination) and excelled in the HMMT (Harvard-MIT Mathematics Tournament) and USAMO (USA Mathematical Olympiad), demonstrating unprecedented mastery of high-level mathematics[3].

* GPQA (Graduate-Level Google-Proof Q&A): Grok 4 Heavy led, and standard Grok 4 significantly outperformed competitors on graduate-level questions[3].

* Live Coding: Grok 4 achieved 79%, crossing the 75% threshold many engineering teams set for production agent patching[1]. It excels on the HumanEval coding benchmark[2].

* Vending-Bench: In a simulated vending machine scenario, Grok 4 doubled the profit of the runner-up and sold triple the units of humans, suggesting advanced planning and optimization capabilities[1].

Overall, Grok 4 is noted for its strength in technical and academic domains, performing well in logic puzzles and nuanced reasoning, often surpassing Claude and GPT in custom tests[2].

Pricing and Accessibility

Access to Grok 4 is primarily through a subscription model, targeting professional and enterprise users[2][3][5]. The standard Grok 4 model is priced at $30 per month[4]. For users requiring more robust capabilities, the Grok 4 Heavy version is available at $300 per month[2][4][5]. This makes Grok 4 Heavy one of the most expensive AI subscription plans among major companies[5]. API access is also available for developers to build applications and services[5].

Use Cases and Limitations

Grok 4 is designed for various real-world applications. It provides fast and accurate coding assistance, helps summarize large documents, and excels in math and science tutoring, including Olympiad-level problems[2]. Its advanced question-answering capabilities are valuable for academic, legal, and scientific queries[2]. For businesses, Grok 4 can support financial forecasting by integrating with RAG feeds, power multi-modal agents that grade lab reports in education, and assist in robotics by quickly rewriting ROS nodes[1]. Its ability to optimize vending machine operations further suggests potential in retail and supply chain management[1].

Despite its strengths, Grok 4 has some limitations. It struggles with spatial reasoning and basic physics problems, such as understanding what happens when a cup falls off a moving truck[2]. At launch, its visual skills were noted as weaker than those of Gemini 2.5 and GPT-4o on diagrams and image reasoning[2]. Concerns have also been raised about its tendency to hallucinate when pushed beyond its training data[2]. Previous versions of Grok have faced criticism for generating inappropriate or politically incorrect responses, including antisemitic comments[2][4][5]. xAI acknowledges these issues and states that it is actively working to mitigate them, with Elon Musk emphasizing a commitment to "maximally truth-seeking" AI[4][5].

Future Developments

xAI has an aggressive roadmap for Grok's future. Grok 5 is already in training[2]. Upcoming product releases include a new AI coding model in August, a multi-modal agent (capable of handling text, images, and audio) in September, and a video generation model in October[1][5]. Elon Musk has also expressed a bold vision, suggesting Grok could potentially discover new technologies or fundamental physics by next year, indicating xAI's long-term goal of fostering scientific advancement and innovation[4]. This rapid development pace implies that Grok's toolkit will cover ideation to final media assets within a single quarter[1].




Overview of Generative AI and Its Emerging Impact

Generative AI is rapidly transforming global economies by streamlining workflows, enhancing content creation, and reducing operational costs, while also presenting challenges around economic displacement and inclusivity[9][10]. In emerging markets, the absence of legacy infrastructure creates opportunities to adopt optimized AI-powered systems and data centers, enabling these economies to leapfrog existing technologies and accelerate productivity[1].

Economic Opportunities and Challenges in Emerging Markets

Emerging markets are uniquely positioned to take advantage of generative AI, as these regions can design modern data infrastructures without being hampered by outdated systems. For example, businesses can directly adopt state-of-the-art data center architectures, and generative AI is expected to revolutionize industries ranging from healthcare to communications in these regions[1]. Meanwhile, reports from leading financial institutions indicate that AI-driven innovations could raise global GDP significantly—up to 7% according to one analysis—and spur new business applications that foster economic growth[9][10].

At the same time, the rise of AI poses risks of job displacement across several sectors. Entry-level roles that have traditionally served as a training ground for new talent are increasingly vulnerable to automation by AI-powered tools, potentially reducing opportunities for emerging workforces. However, many experts believe that with strong upskilling programs and strategic investments in education, these challenges can be mitigated, allowing AI to coexist with human labor to create more advanced opportunities[5].

Bridging the Digital Divide and Enhancing Access

The digital divide encompasses gaps in availability, affordability, quality, and relevance of internet access. Nearly 3.6 billion people remain unconnected worldwide, highlighting the need for better community networks and supportive infrastructure to bridge this gap[7]. In low-income countries, limited technology access and a lack of digital skills further restrict the benefits of AI-driven solutions, creating what many experts refer to as a self-reinforcing cycle of inequality[3].

Policymakers and industry leaders must therefore work together to expand internet connectivity, invest in community-run networks, and implement training programs that improve digital literacy. This multifaceted approach is critical to ensuring that the benefits of generative AI are widely distributed and that vulnerable populations are not left behind.

Language Representation and Cultural Relevance

An equally significant aspect of digital inequality is the language barrier. Dominant global languages such as English, Chinese, and Spanish receive the bulk of investments and technological support, while low-resource languages—like Malagasy or Navajo—struggle with insufficient digital content and technological backing[2]. This linguistic digital divide means that billions of people, especially in emerging economies, do not benefit fully from digital advances if the content is not accessible in their native tongues[4].

Investing in local language technologies not only improves digital inclusivity but also transforms how communities interact with information. Studies have shown that vernacular digital content can significantly enhance engagement and credibility, thereby supporting local entrepreneurship, job creation, and cultural preservation.

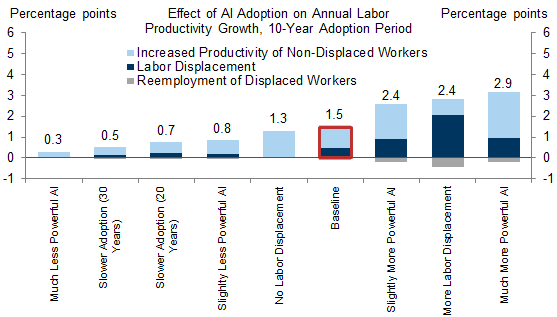

From Economic Displacement to Workforce Transformation

The integration of generative AI into various sectors is accompanied by both opportunities and risks. On one hand, AI has the potential to enhance productivity and drive innovation; on the other hand, it may displace traditional job roles, particularly those at the entry level. For instance, repetitive tasks in fields such as market research and sales are increasingly being automated, which could adversely impact job opportunities for less experienced workers[5].

The challenge lies in transforming these disruptions into opportunities for growth. Investments in training and reskilling, along with strategic state and private sector initiatives, are essential in redirecting the workforce towards higher-value tasks. By integrating advanced AI tools while simultaneously prioritizing workforce development, companies can foster an environment where technological advances contribute to long-term job creation and economic diversification[10].

Inclusive Innovation Policies and Recommendations

To realize the full potential of generative AI while mitigating risks to digital equality in emerging markets, there is a clear need for inclusive innovation policies. These policies should focus on several key areas:

• Enhancing digital infrastructure and connectivity to bridge the access gap, particularly in underserved and rural areas[7].

• Investing in comprehensive digital literacy and skills training programs that empower local communities to adopt and benefit from advanced technologies[3].

• Promoting the development and deployment of language technologies that support low-resourced languages to ensure that all populations can access digital content in their native languages[2][4].

• Facilitating public-private collaborations that create diversified value chains and foster local ownership of AI technology, ensuring that emerging market companies can compete on an equal footing with large multinational firms[1].

• Implementing robust regulatory frameworks that protect vulnerable groups and ensure ethical use of automated systems, while at the same time encouraging the development of new business models that integrate AI innovations responsibly[5].

By addressing these areas, governments and stakeholders can ensure that generative AI acts as a force for inclusive development, balancing economic growth with the need for social equity and cultural preservation.


Introduction to AI Ethics

In recent discussions surrounding artificial intelligence (AI), the implications of ethics have become a pivotal theme, focusing on how AI technologies should be designed, implemented, and monitored. Ethical frameworks are critical in ensuring that AI advancements serve societal needs without exacerbating existing inequalities or creating new forms of bias. Recent literature has highlighted several areas that explore the ethical dimensions of AI and its effects on society.

Addressing Bias and Inequality

The rapid integration of AI into diverse sectors poses ethical challenges related to bias and equity. Existing literature suggests that algorithms can inadvertently perpetuate or even worsen societal inequalities. For instance, flawed data used to train AI systems often leads to biased outcomes in essential areas such as healthcare and hiring decisions. As discussed in the literature, “biassed algorithms can promote discrimination or other forms of inaccurate decision-making that can cause systematic and potentially harmful errors”[3].

Conversely, there is potential for AI to help address these inequities if it is designed with fairness in mind. There is a growing acknowledgment that AI can be both a source of bias and a tool for correcting it, underlining the complexity of its impact on social equity and fairness. Discussions emphasize that “if people can agree on what ‘fairness’ means,” AI could indeed play a role in mitigating inequities in society[3].

Ethical Frameworks for AI Development

Recent scholarly work advocates for a comprehensive ethical framework guiding the development and deployment of AI. This framework should include principles across disciplines—including ethics, philosophy, sociology, and economics—to ensure that the benefits of AI are equitably distributed. The integration of ethical considerations into technical fields is critical, as developers should not only focus on functional aspects but also on ethical implications, such as privacy concerns and the responsibility associated with algorithmic decisions[2].

The strategic integration of ethical oversight in AI is essential. As AI capabilities expand, literature calls for transparency and accountability in AI design. This encompasses development practices that prioritize human values and foster cooperative efforts to ensure that AI serves the global good[2].

Transparency and Explainability

A significant aspect discussed in the literature is the importance of explainable AI. The ability of AI systems to provide clear, understandable reasoning behind their decisions is crucial for building trust between humans and machines. As highlighted, “explainability of AI systems is essential for building trust” and involves understanding the decision-making processes behind AI[2]. This drive for transparency helps mitigate issues arising from the 'black box' nature of many AI algorithms, where even the developers may not fully grasp how decisions are formed.

Moreover, the need for psychological audits and assessments is emphasized to evaluate the fairness and potential biases embedded in AI systems. These audits can critically assess whether the data sources are representative and how they impact societal outcomes[3]. This approach encourages developers to prioritize ethical use in their applications, fostering better societal interactions with AI technologies.

Ethical Dilemmas in AI Adoption

The ethical challenges associated with AI are not limited to design and deployment; they also extend to societal and workplace implications. For example, as AI systems become more prevalent in workplaces, discussions around job displacement emerge. A significant concern posited is that “those systems essentially create winners and losers” in societies marked by existing inequalities, potentially aggravating mental health issues among workers fearful of job loss due to AI[3].

Furthermore, the deployment of AI in crucial sectors, such as healthcare, raises ethical dilemmas about decision-making in high-stakes situations. Literature discusses how AI can influence human behaviors and cognition, indicating that “human users need the training to detect errors” and must cultivate a critical mindset towards AI suggestions to mitigate inherited biases[3]. This underscores the need for comprehensive education and training approaches that empower individuals to navigate AI systems effectively.

Conclusion: A Call for Responsible AI

As AI technology continues to evolve, the discourse surrounding its ethical implications must also advance. Stakeholders, including developers, policymakers, and the general public, are called to foster a responsible approach to AI utilization. There is a consensus that collaboration across various disciplines is necessary to establish a framework that guarantees accountability, fairness, and transparency while maximizing the societal benefits of AI.

Going forward, it is imperative to create standards and guidelines that ensure AI deployment aligns with ethical considerations, thereby promoting not just technological innovation but also societal well-being and justice. The ongoing conversations about AI in ethics and society illustrate an urgent need for a multidisciplinary approach to navigate the complex landscape AI presents[2][3].

In summary, the integration of ethics into AI systems is not merely about compliance but about shaping a future where AI technologies uplift societal values and enhance the quality of life for all.
