Deepfakes in Geopolitical Propaganda: Case Studies, Strategic Analysis, and Countermeasures

Introduction

Recent advances in artificial intelligence have enabled the rapid production of highly realistic synthetic media, commonly known as deepfakes. Deepfakes, which span manipulated images, audio, and video, have evolved into tools that can be exploited not only for entertainment and commercial purposes but also for state-sponsored propaganda and geopolitical manipulation[1][2]. Their proliferation has prompted detailed analysis of the strategic objectives behind their use, of innovations in detection, and of policy responses aimed at countering their misuse in the political arena.

Documented Incidents and Case Studies

Several documented incidents illustrate the range of deepfake misuse relevant to geopolitical propaganda. A widely circulated AI-generated image of Pope Francis wearing a puffer coat, along with sexually explicit synthetic images of high-profile figures such as Taylor Swift, provoked widespread confusion and alarm, highlighting how rapidly deepfakes can spread disinformation[1][6]. State-sponsored initiatives have also brought deepfakes into institutional use. Chinese state media, for example, has experimented with AI-generated news anchors, signaling a deliberate move to integrate synthetic media into routine propaganda operations[2]. Taken together, these incidents point to a pattern in which deepfakes are used to undermine trust in traditional media and political discourse, thereby influencing public perception and international narratives.

Strategic Objectives in Political Propaganda

The strategic objectives behind state-sponsored deepfake operations are multifaceted. Deepfakes are intended to sow discord, manipulate voter behavior, and create doubts about the authenticity of media evidence. By producing convincingly realistic but entirely fabricated content, state actors seek to erode public confidence in established news sources and governmental institutions. For example, the misuse of synthetic media in election contexts aims to disrupt collective agenda formation and to destabilize the legitimacy of electoral processes[4]. These propaganda campaigns frequently aim to delegitimize political candidates and erode trust in democratic practices, ultimately creating an environment where factual accuracy is undermined in favor of competing narratives.

Detection and Technological Countermeasures

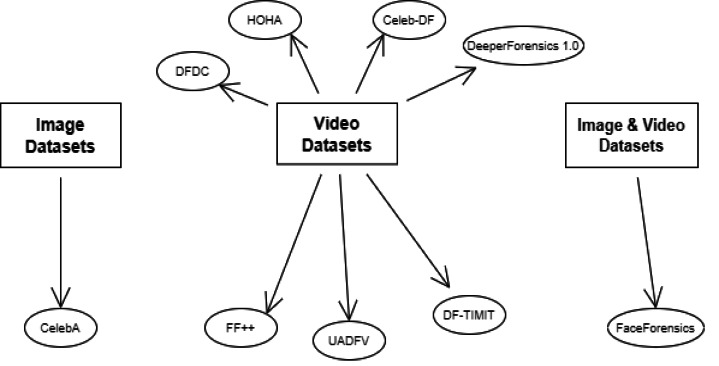

Technological solutions form a critical part of the countermeasures against deepfakes. Detection methods focus on identifying telltale signals left behind by AI synthesis. For instance, forensic analyses reveal that deepfake videos often contain repeated patterns and unnatural artifacts (such as identical gestures, constant background positioning, and anomalous voice modulations) that genuine human interactions do not typically exhibit[8]. Tools that use spectral artifact analysis and context-based behavioral analysis are already employed to flag suspicious content in automated workflows. However, these techniques face challenges when deepfakes are seamlessly integrated into real-time communications. Watermarking has also been investigated as a potential technique, although recent research suggests that robust watermarking is difficult to achieve against a determined adversary[8].
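The "repeated patterns" signal described above can be illustrated with a minimal sketch. It assumes that some upstream step (not shown, and not described in the cited sources) has already reduced each video frame to a short vector of numeric features, for example facial landmark coordinates. The function name `repeated_segment_starts` and its `window` and `precision` parameters are hypothetical choices made for illustration; this is not the method used by any particular detection tool.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def repeated_segment_starts(
    frames: Sequence[Sequence[float]],
    window: int = 5,
    precision: int = 2,
) -> List[List[int]]:
    """Group the start indices of frame windows that recur exactly.

    Each frame is a sequence of numeric features (an assumption of this
    sketch). Features are rounded to `precision` decimals so that tiny
    numeric noise does not hide an otherwise identical gesture.
    """
    if window <= 0:
        raise ValueError("window must be positive")

    seen: Dict[Tuple[Tuple[float, ...], ...], List[int]] = defaultdict(list)
    for start in range(len(frames) - window + 1):
        # Quantize every frame in the window and use the result as a key.
        key = tuple(
            tuple(round(value, precision) for value in frame)
            for frame in frames[start:start + window]
        )
        seen[key].append(start)

    # Keep only windows that occur more than once, ordered by first occurrence.
    return sorted(starts for starts in seen.values() if len(starts) > 1)


# Hypothetical example: a five-frame gesture that recurs after three other frames.
gesture = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.4), (0.3, 0.6), (0.4, 0.8)]
filler = [(1.0, 1.0), (1.1, 0.9), (1.2, 0.8)]
suspicious = gesture + filler + gesture
natural = [(i * 0.1, i * 0.05) for i in range(13)]

repeats_in_suspicious = repeated_segment_starts(suspicious)
repeats_in_natural = repeated_segment_starts(natural)
```

Exact matching after rounding is a deliberately simple choice. A static stretch of video would also be reported as overlapping repeats, which loosely mirrors the "constant background positioning" cue. A practical system would more likely need approximate matching and would treat such a flag as one input to human review rather than as proof of manipulation.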

Diplomatic and Regulatory Responses

On the diplomatic front, governments and international entities have increasingly recognized the threat that deepfakes pose to national security and electoral integrity. Several countries have initiated legislative efforts to criminalize the malicious creation and distribution of deepfakes, particularly those intended to interfere with elections or incite violence. For example, several U.S. states have passed laws targeting deepfake pornography and election interference, while federal proposals such as the DEEPFAKES Accountability Act seek to impose liability on creators and disseminators of harmful deepfakes[6]. Similarly, comprehensive policy proposals outlined in an arXiv report call for systemic regulation along the deepfake supply chain, extending responsibility to model developers, providers, and compute service operators in order to make deepfakes harder to produce[5].

Policy Recommendations and Future Directions

A synthesis of the available research and policy proposals suggests several key recommendations for addressing the challenges posed by state-sponsored deepfakes. First, transparency measures such as mandatory labeling of synthetic media in political advertisements are essential to maintain public trust and to prevent deceptive practices from influencing the electorate. Lawmakers at both state and federal levels should mandate clear disclaimers on AI-generated content, especially content disseminated near elections[11]. Second, comprehensive regulatory frameworks should extend liability along the entire deepfake supply chain. This approach would hold developers, model providers, and distributors accountable, thereby reducing the avenues available to malicious actors without stifling legitimate research and artistic expression[5]. Third, governments should invest in and further deploy advanced detection technologies that combine machine-based analysis with human oversight. Encouraging collaboration among technological innovators, regulatory bodies, and international allies would enhance detection capabilities and help ensure the timely removal or labeling of harmful content[8]. Lastly, diplomatic initiatives should focus on establishing international standards and cooperative measures to combat deepfake-driven propaganda, since these challenges are transnational by nature. Such cooperation would involve sharing best practices, harmonizing legal frameworks, and enhancing cross-border investigative capacities[10].
