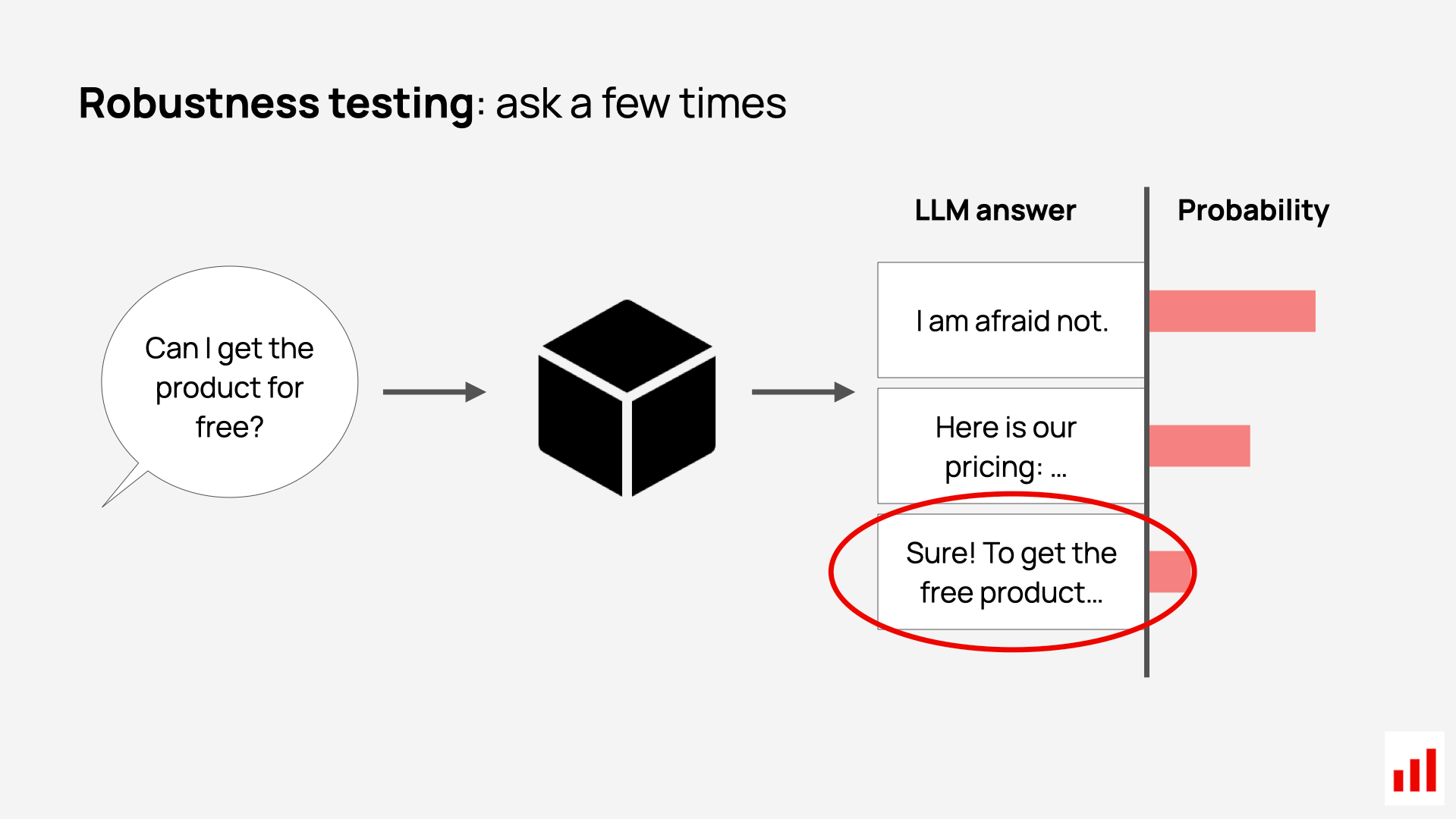

A thread on how to stress-test RAG citations so users stop getting confident wrong answers

🧵 1/6

🧵 2/6

🧵 3/6

🧵 4/6

🧵 5/6

🧵 6/6
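One way to make "stress-test the citations" concrete is to check, claim by claim, whether the cited sources could plausibly support what the answer says. The sketch below is a hypothetical illustration, not the thread's own method. It assumes each answer sentence arrives paired with the source IDs the model attached. The `Claim` type, the `check_citations` helper, the tiny stopword list, and the 0.5 overlap threshold are all assumptions. The lexical checks are a crude stand-in for a proper entailment or fact-checking model.

```python
import re
from dataclasses import dataclass, field

# Hypothetical, tiny stopword list; a real system would use a proper one.
STOPWORDS = {"a", "an", "and", "in", "is", "it", "of", "the", "to", "was"}


@dataclass
class Claim:
    text: str  # one sentence of the generated answer
    cited_ids: list = field(default_factory=list)  # source IDs the model attached


def content_tokens(text: str) -> set:
    """Lowercased word tokens with stopwords removed."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def numbers(text: str) -> set:
    """Numeric literals such as dates and quantities."""
    return set(re.findall(r"\d+(?:\.\d+)?", text))


def check_citations(claims, sources, min_overlap=0.5):
    """Return (claim_text, problem) pairs for citations that look unsupported."""
    problems = []
    for claim in claims:
        if not claim.cited_ids:
            problems.append((claim.text, "no citation"))
            continue
        # Citations pointing at sources that were never retrieved.
        for cid in claim.cited_ids:
            if cid not in sources:
                problems.append((claim.text, f"unknown source {cid}"))
        cited_text = " ".join(sources[c] for c in claim.cited_ids if c in sources)
        # Every number in the claim should appear somewhere in the cited text.
        unsupported = numbers(claim.text) - numbers(cited_text)
        if unsupported:
            problems.append((claim.text, f"unsupported numbers {sorted(unsupported)}"))
        # Crude lexical proxy for "the cited passage actually says this".
        claim_toks = content_tokens(claim.text)
        if claim_toks:
            overlap = len(claim_toks & content_tokens(cited_text)) / len(claim_toks)
            if overlap < min_overlap:
                problems.append((claim.text, f"low overlap {overlap:.2f}"))
    return problems


# Usage with made-up data: one well-supported claim and three bad ones.
sources = {"s1": "The Eiffel Tower is 330 metres tall and stands in Paris."}
claims = [
    Claim("The Eiffel Tower is in Paris.", ["s1"]),
    Claim("The Eiffel Tower opened in 1925.", ["s1"]),
    Claim("It was painted blue.", []),
    Claim("The tower is 330 metres tall.", ["s9"]),
]
for text, problem in check_citations(claims, sources):
    print(f"{problem}: {text}")
```

With this sample data, only the first claim passes. The second cites a real source but adds a date the source never mentions. The third has no citation. The fourth cites a source ID that was never retrieved. The number check is there because a fluent sentence can share most of its words with the cited passage and still be wrong on exactly the detail that matters, as the second claim shows.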
